The Pentagon has hit a critical impasse with Anthropic after the AI company’s CEO, Dario Amodei, issued a stark ultimatum over his ethical concerns about the government’s unchecked use of artificial intelligence. Amodei's repeated warnings about the dangers of military AI applications have put pressure on the Biden administration to reconsider how it integrates AI technologies into defense operations. The standoff underscores a rapidly escalating crisis at the intersection of national security and AI ethics.

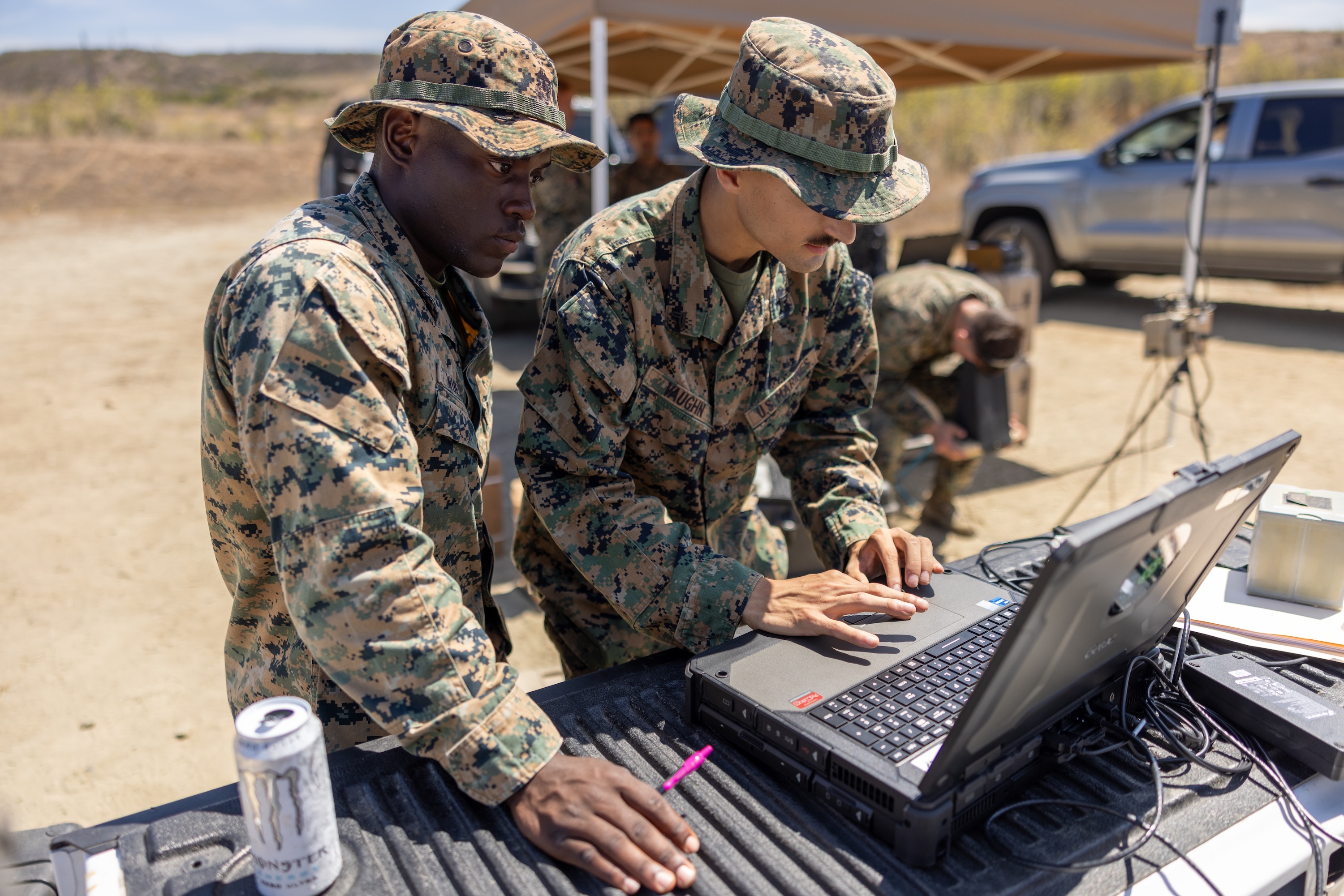

The backdrop to this confrontation is the Pentagon’s aggressive push to integrate AI capabilities across its military branches in pursuit of an operational edge over adversaries. Since the 2018 National Defense Strategy, which sharpened the U.S. military's focus on technological superiority, the Department of Defense has invested billions in AI initiatives. However, the ethical implications, particularly those of autonomous weapons, have remained contentious, revealing divisions not only within the defense establishment but also in the technology community.

The ramifications of Amodei's concerns are significant, exposing vulnerabilities in the Pentagon’s current strategy. A backlash from AI developers could impede the U.S. military's access to cutting-edge technologies and thereby compromise military preparedness. Moreover, if left unaddressed, the ethical quandaries surrounding AI may fuel public distrust of, and resistance to, military applications of advanced technologies, potentially limiting future innovation.

Key actors in this evolving situation include not just the Pentagon but also major tech companies and AI researchers who fear how their technologies could be used in military contexts. Amodei's motivations appear to stem from a commitment to responsible AI deployment and from a fear that unrestrained government use could lead to advanced AI systems being employed in unpredictable or harmful ways. His stance contrasts with the Defense Department's aggressive pursuit of the technological advances it considers necessary for modern warfare.

Operationally, the Pentagon's AI investments are part of a broader $4.6 billion push in fiscal year 2024 to advance its Joint All-Domain Command and Control strategy. This includes the development of sophisticated machine learning algorithms and autonomous systems capable of decision-making in high-stakes environments. Yet this technological arms race could turn into a crisis if leading developers withdraw their support over ethical concerns, leaving gaps in military capabilities.

The immediate consequence could be a breakdown in trust between the government and AI developers, stalling key projects and pushing innovators toward non-military applications. As military planners seek to maintain technological superiority, they may increase both funding for AI firms and pressure on them, potentially igniting a political and public relations crisis. Such a crisis could deter collaboration and innovation in a field that is essential for ensuring readiness against rival powers.

Historically, similar tensions have emerged during the development of technologies like drones and cyber-warfare tools. The current predicament also echoes the fallout from the Vietnam War, when ethical concerns led to a backlash against military research, demonstrating that unresolved ethical debates can lead to significant operational limitations. If the Pentagon fails to navigate these waters, it risks repeating costly historical mistakes.

In the coming weeks, watch for the Pentagon to implement new policies in response to pressure from AI firms like Anthropic. Indicators to monitor include statements from key defense officials, changes in funding allocation for AI projects, and any collaborative agreements reached or abandoned between government and private sector partners. The outcome of this conflict could reshape the future of military AI and its ethical landscape.